I am currently a tenure-track Assistant Professor leading the VILA Lab at MBZUAI.

I was a postdoctoral researcher at CMU in the groups of Professor Marios Savvides and Professor Eric Xing (2019-2022).

My research interests span machine learning, computer vision, efficient deep learning, and related areas.

Prior to CMU, I was fortunate to be a joint-training Ph.D. student (2017-2019) in the IFP group at UIUC, advised by Prof. Thomas S. Huang.

1. Multiple positions are available, including PhD, master's, visiting student, and postdoc positions. Please send me your CV if you are interested in working with me at MBZUAI.

2. We also have a few joint postdoc positions with CMU (starting in 2025); please reach out if you are interested.

(For visiting students: I currently accept only those who either plan to pursue their PhD in my group or are already senior PhD students at other universities seeking a short- or long-term visit. If you do not meet these criteria, there is no need to apply for a visiting position in my group. I may not be able to respond to every inquiry, but I do read all the emails I receive. Thanks for your understanding.)

Email: zhiqiangshen0214 AT gmail.com | Zhiqiang.Shen AT mbzuai.ac.ae

[Google Scholar] | [Github] | [Zhihu]

Follow @szq0214

Research Interest

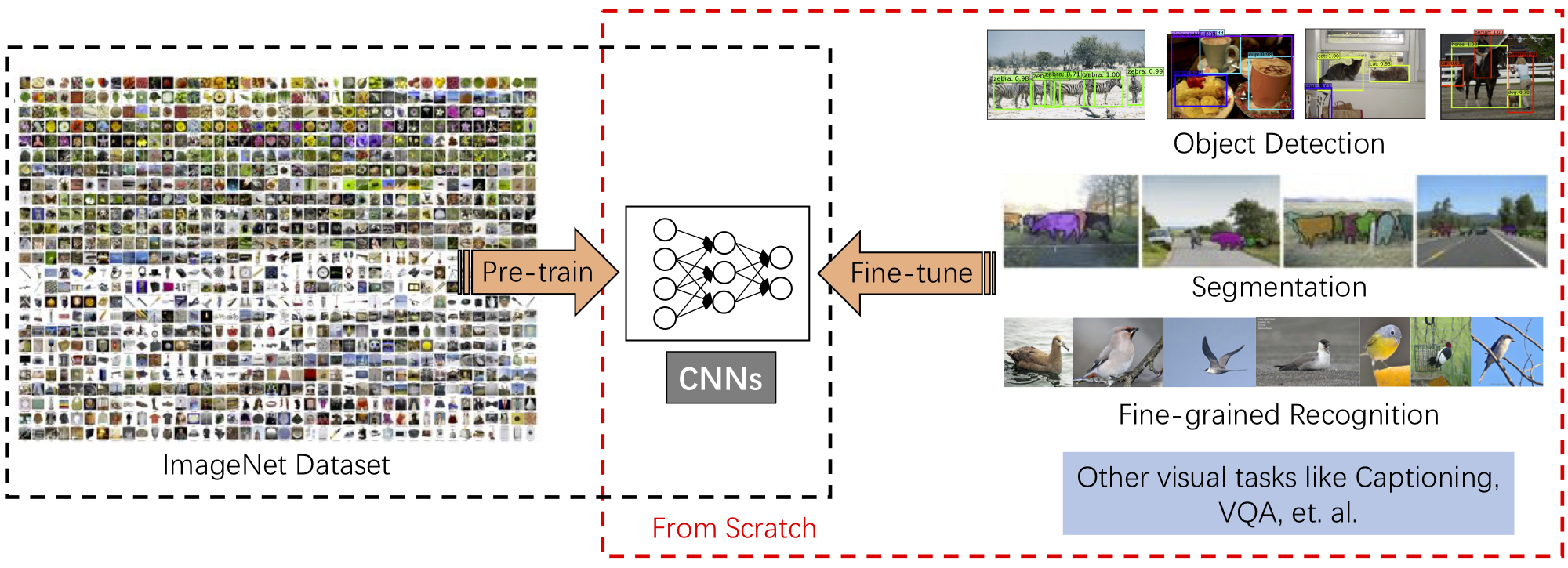

My research interests focus on the broad areas of efficient deep learning, machine learning, computer vision, and natural language processing. Specifically, I am interested in deep learning methods for image recognition and object detection, efficient architecture design, and parameter-efficient finetuning strategies. Recently, I have been focusing on:

- Foundation Models in CV and NLP

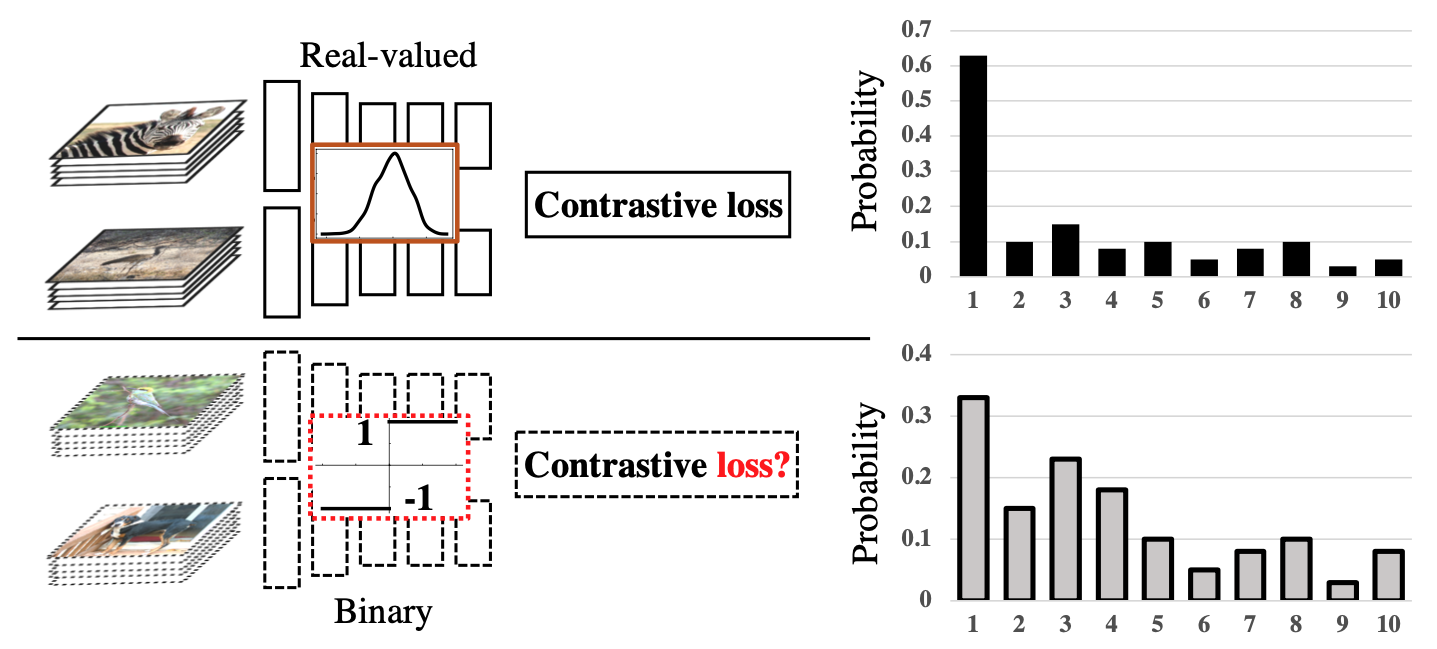

- Low-bit and Efficient Networks

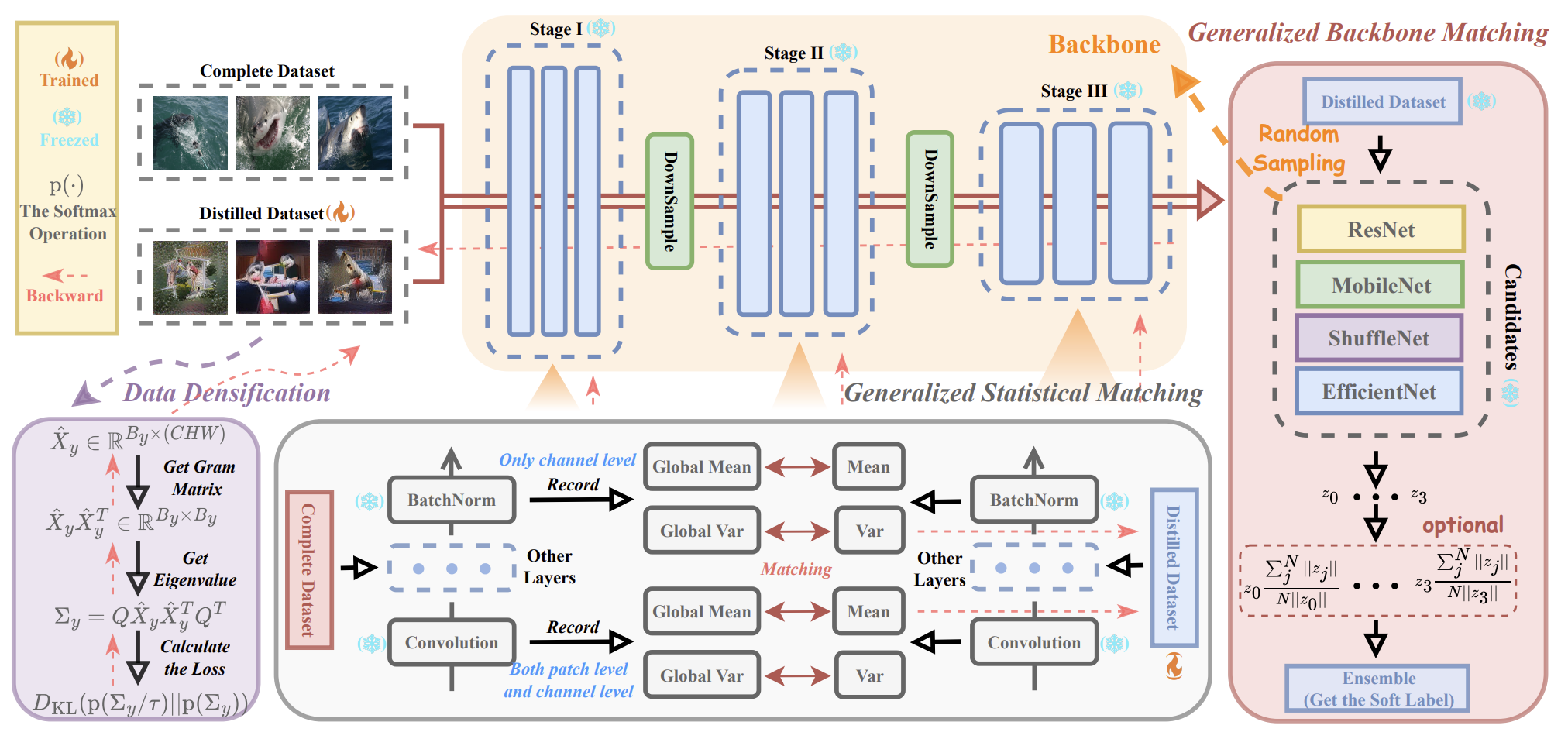

- Knowledge Distillation on Models and Datasets

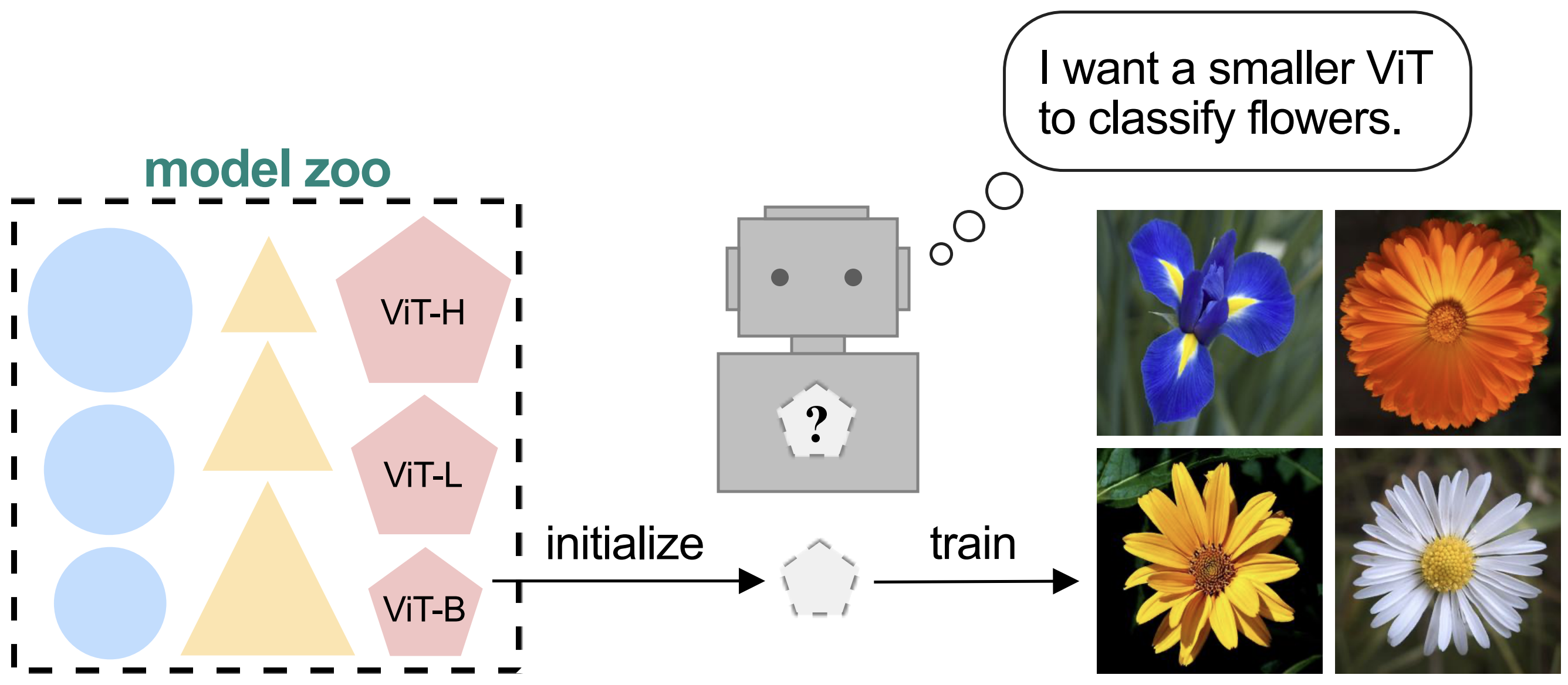

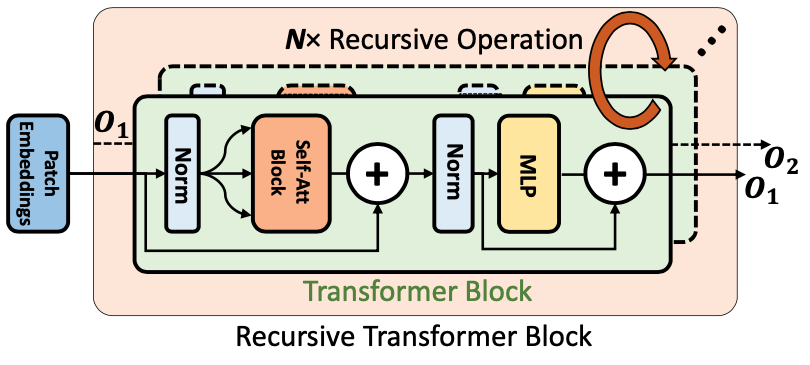

- Designing and Training Highly-efficient Network Architectures for CNNs and Transformers

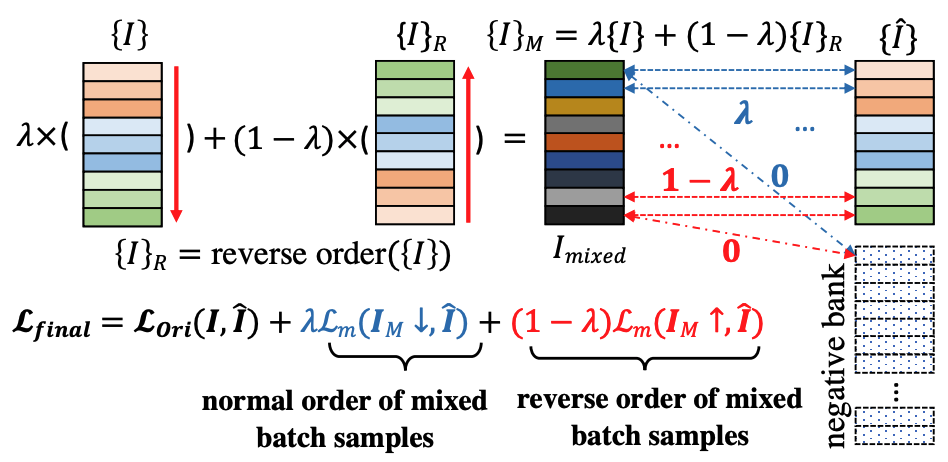

- Un(Self-)supervised / Weakly-supervised Learning

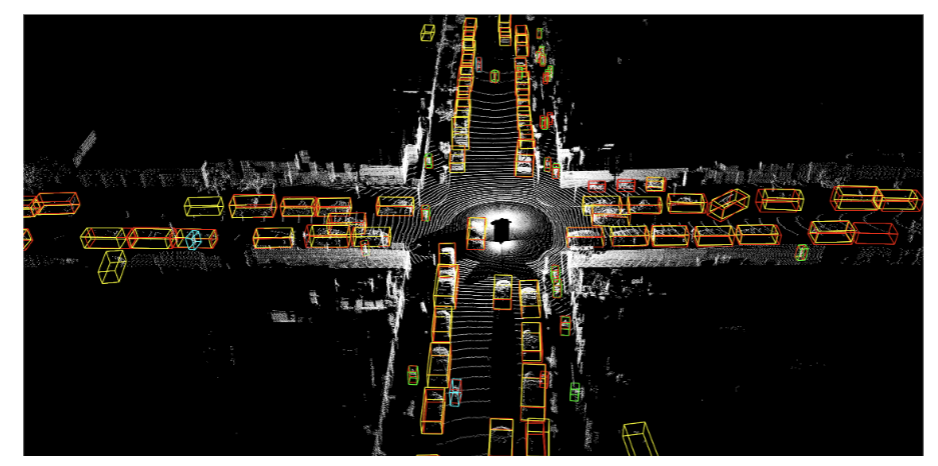

- Image Understanding, Including Object Detection, Captioning and Fine-grained Recognition

- Few-shot and Zero-shot Learning

Prospective Students: I am actively looking for self-motivated students (including graduate students and research assistants) who are interested in artificial intelligence, machine learning, VLMs and LLMs, deep generative networks, and related areas. I have several PhD/Master's/RA openings starting in Fall 2025/2026 at MBZUAI. Please drop me an email with your CV if you are interested in joining.

1. MBZUAI-LLM Project (SlimPajama-DC, Mobile-MMLU, Bi-Mamba, LLM360, GBLM-Pruner): Huggingface

2. OptiML: Optimizing Efficiency in Machine Learning (GLoRA, Partial Transfer, ViT-Slim): Github

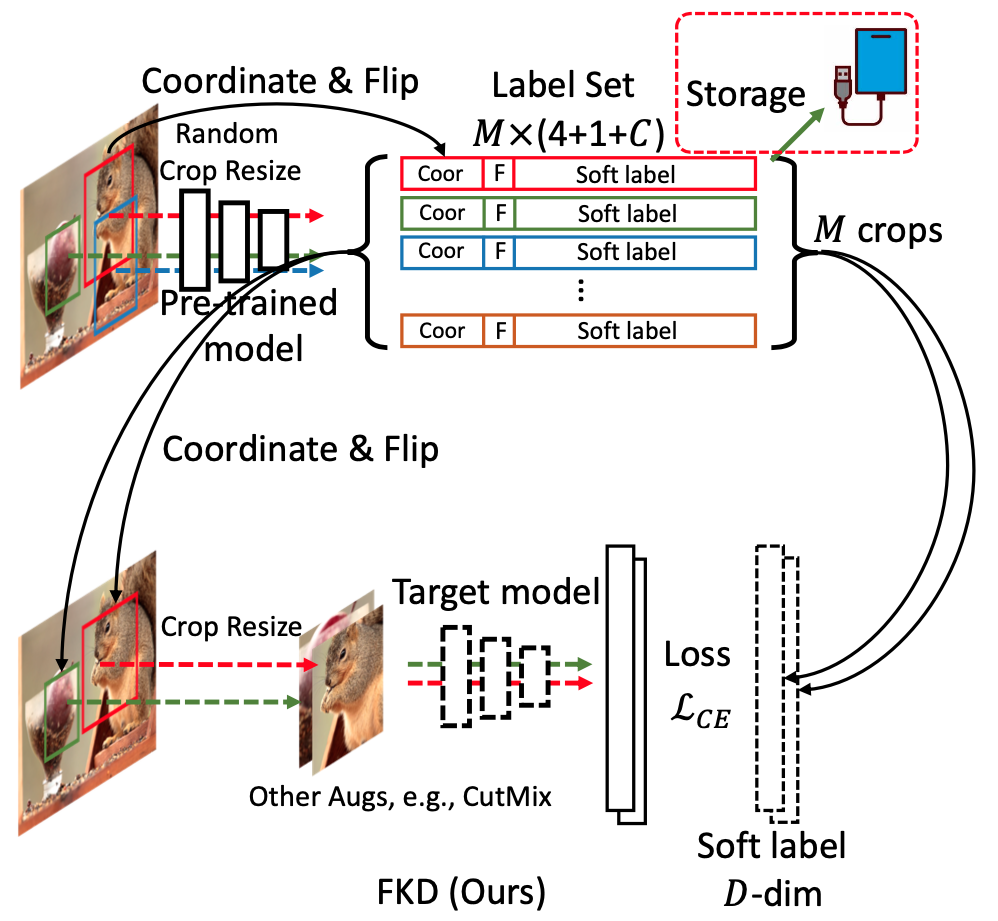

3. Evolving Knowledge Distillation: The Role of Pre-generated Soft Labels (FKD, FerKD, SRe2L): Github

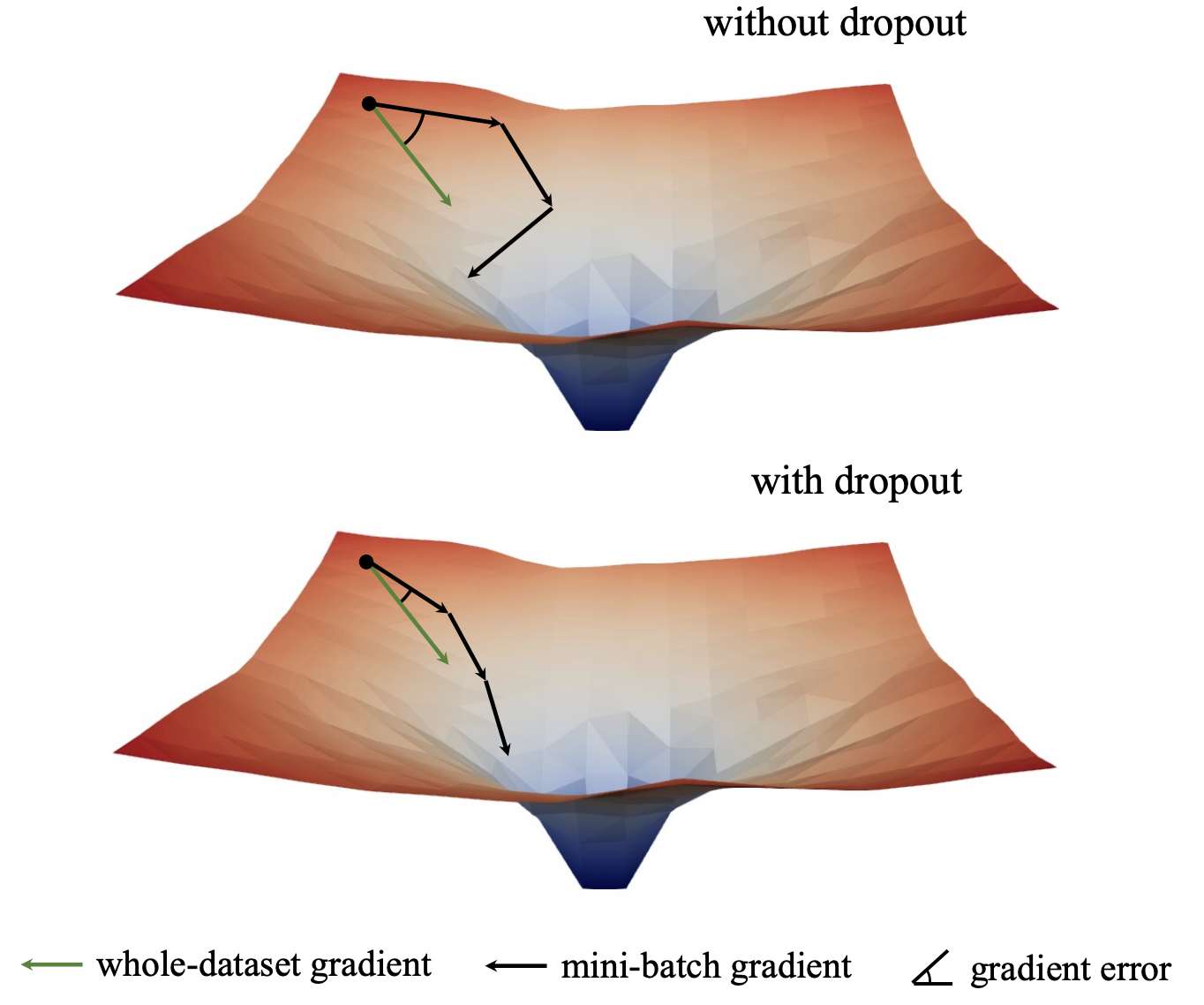

4. Pure Machine Learning Problems, such as Dropout, Knowledge Distillation, and BNN Optimization.

...

News

- [Jun 26, 2025] Two papers are accepted in ICCV 2025.

- [May 16, 2025] Two papers are accepted in ACL 2025. One paper is accepted in KDD 2025.

- [Jan 23, 2025] Two papers are accepted in ICLR 2025. One paper is accepted in CVPR 2025. Congrats to Tianjun and Yaxin.

- [Dec 26, 2024] [New]: We have released Mobile-MMLU, a large-scale benchmark designed specifically for mobile scenarios. Please check it out if you are interested in Mobile Intelligence or Apple Intelligence.

- [Sep 28 & Oct 19, 2024] Two papers are accepted in TMLR 2024. Congrats to Xijie and Zeyuan.

- [Sep 25/26, 2024] Four papers (three in main conference and one in datasets and benchmarks track) are accepted in NeurIPS 2024. Congrats to Shitong, Liang, and Sukmin.

- [May 2, 2024] Two papers are accepted in ICML 2024. Congrats to Tianjun and Kirill.

- [Nov 10, 2023] I received a Google Research Award grant through the Google-MBZUAI Program.

- [Mar 30, 2023] [Workshop & Competition]: We organized a workshop and two competitions (hosted on the Kaggle platform) at ICCV'23 on "Resource-Efficient Deep Learning". More details are available on the workshop website and the competition pages (competition-1, competition-2).

- [Nov 28, 2022] [Talk]: I gave a talk at Fudan University introducing our recent works on Efficient Deep Learning. Thanks to Prof. Zuxuan for the invitation.

- [Jul 12, 2022] New: I will serve as an SPC (Meta-Reviewer) for AAAI 2023.

- [Jan 19, 2022] [Talk]: I will give a talk at AI Drive to introduce our Un-Mix paper. Thanks to Haitao for the invitation.

- [Jan 19, 2022] [Talk]: I will give a talk at POSTECH (Pohang University of Science and Technology) to introduce our recent KD-related works. Thanks to Prof. Sungsoo (Peter) for the invitation.

- [Jan 19, 2022] [Talk]: I will give a talk at UT Austin. Thanks to Tianlong for the invitation.

- [Jan 7, 2022] New: We have released a demo of Un-Mix; please check it out on GitHub!

- [Dec 13, 2021] [Talk]: At the invitation of Peter Naftaliev, I will give a talk/lecture on 2d3d.ai that systematically introduces our recent works on Knowledge Distillation. Please join us if you are interested in this topic. More details are available on Meetup and Reddit.

- [Dec 1, 2021] Two papers accepted to AAAI 2022.

- [Oct 31, 2021] One paper accepted to the NeurIPS 2021 AI for Science workshop, one paper accepted to ICCV 2021, and one paper accepted to TIP 2021.

- [Jul 12, 2021] Our code and models for S2-BNN (Self-supervised Binary Neural Networks Using Distillation Loss, CVPR 2021) have been released on Github.

- [May 8, 2021] One paper accepted to ICML 2021 (regarding optimization on binary networks). Code and models are available on Github.

- [Mar 1, 2021] Three papers accepted to CVPR 2021.

- [Jan 12, 2021] One paper accepted to ICLR 2021. Our project page is here.

- [Dec 23, 2020] One paper accepted to AAAI 2021.

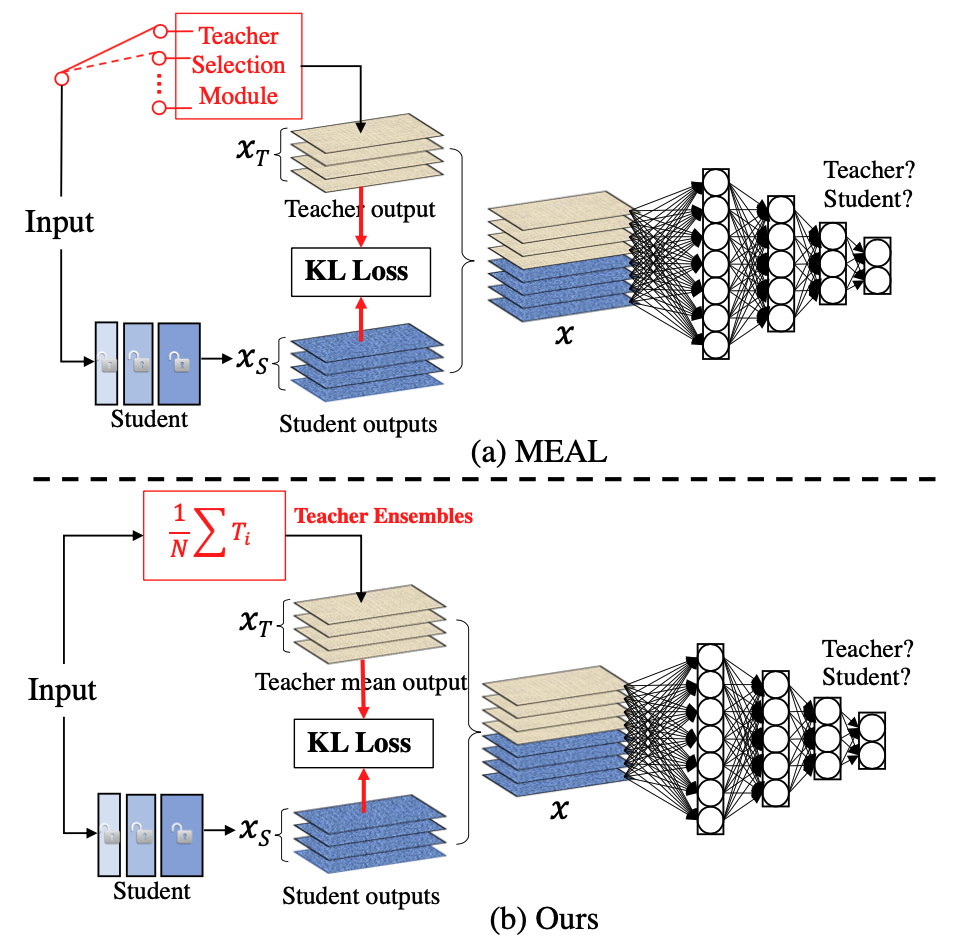

- [Nov 3, 2020] A short version of MEAL V2 has been accepted to the NeurIPS 2020 workshop "Beyond BackPropagation: Novel Ideas for Training Neural Architectures". The long version is coming soon.

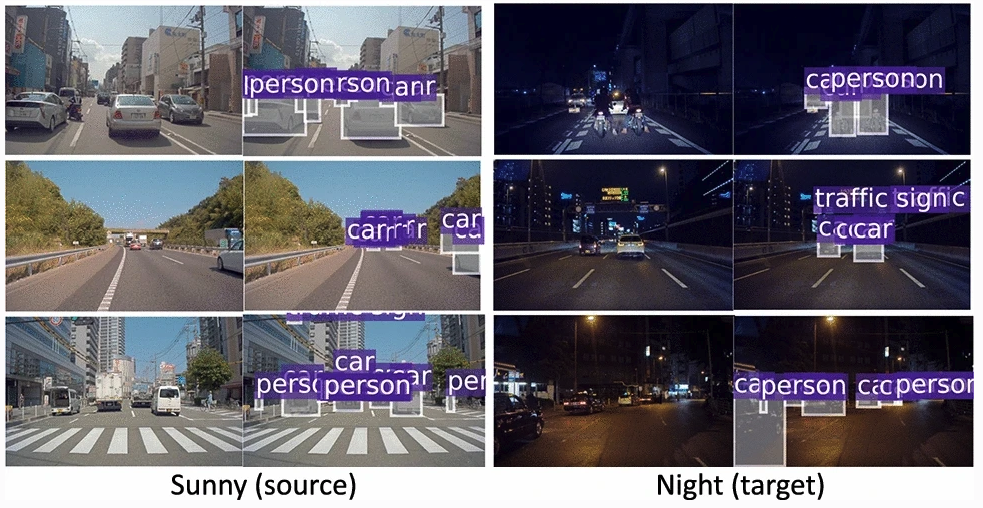

- [Oct 14, 2020] Our extended version of SCL (a domain-adaptive object detection framework via gradient detach and stacked complementary losses), together with INIT, has been accepted to IJCV 2020. Code and models are available on GitHub.

- [Sep 19, 2020] We released MEAL V2, the first approach that can boost vanilla ResNet-50 to 80%+ Top-1 accuracy on ImageNet without tricks. Our code and models are available on GitHub.

- [July 2, 2020] Three papers accepted to ECCV 2020.

- [June 14, 2020] One paper accepted to CVPR 2020.

- [July 14, 2019] The INIT dataset has been released; please check out our Project Page.

- [June 5, 2019] DSOD V2 was accepted to IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI). This version provides more ablation studies and some preliminary results exploring the factors involved in training two-stage detectors from scratch.

- [Feb 26, 2019] One paper accepted to CVPR 2019.

- [Dec 21, 2018] One paper accepted to ICLR 2019.

- [Nov 1, 2018] Our paper MEAL: Multi-Model Ensemble via Adversarial Learning was accepted to AAAI 2019 as an oral presentation. Code and models are available on GitHub.

- [Sep 27, 2018] An extended version of DSOD is available on arXiv.

- [July 29, 2018] One paper accepted to ECCV 2018.

- [Jan 12, 2018] I gave an invited talk at Baidu IDL, Sunnyvale, CA, USA on learning object detectors from scratch. The talk covered our two recent papers, DSOD and GRP-DSOD. Slides can be downloaded here (or from Google Drive).

- [Dec 22, 2017] We released the code and model for MSR-VTT Challenge (Video Captioning) on Github.

- [Dec 04, 2017] Our new paper GRP-DSOD is available on arXiv. Code and models are available on GitHub.

- Code and models for DSOD are available on GitHub.

- Code and models for Network Slimming are available on GitHub.

- Two papers accepted to ICCV 2017.

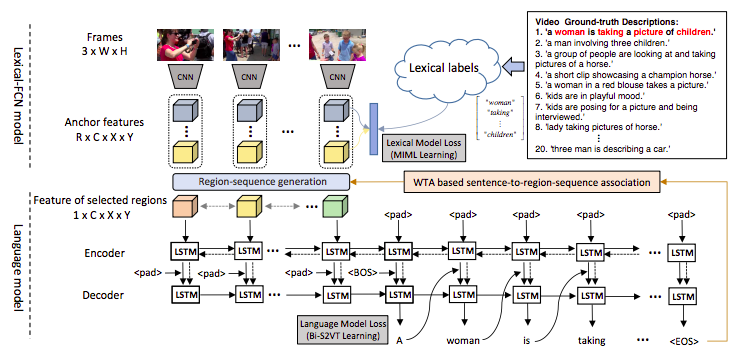

- Two papers submitted to ICCV 2017.

- Our paper "Weakly Supervised Dense Video Captioning" accepted to CVPR 2017.

- During my internship, our team won the 2016 Intel China Award (ICA), the highest award for team achievement at Intel China.

- 4th Place (Human Evaluation) and 5th Place (Automatic Evaluation Metrics) Winners at the MSR-VTT Challenge (Video Captioning). Code is here.


Recent & Selected Publications (Full List)


Academic Activities

- Meta-Reviewer (SPC): AAAI 2023, 2024.

- Conference reviewer: ICLR 2023, NeurIPS 2022, ICML 2022, ICLR 2022, ECCV 2022, CVPR 2022, NeurIPS 2021, ICML 2021, CVPR 2021, AAAI 2021, WACV 2021, NeurIPS 2020, ECCV 2020, BMVC 2020, IJCAI 2020, CVPR 2020, AAAI 2020, ICCV 2019, CVPR 2019, AAAI 2019, CVPR 2018, ACCV 2018, NIPS 2016.

- Journal reviewer: TPAMI, IJCV, TMLR, TIP, TMM, JVCI, etc.

Awards and Honors

- CVPR 2019 Doctoral Consortium travel award. Mentor: Prof. Trevor Darrell.

- ICLR 2019 travel award, 2019

- AAAI 2019 student scholarship award, 2018

- ICCV 2017 student volunteer, 2017

- Huawei scholarship, 2017

- During my internship, our team won the 2016 Intel China Award (ICA), the highest award for team achievement at Intel China, 2016

- Tung OOCL scholarship, 2015

- Special Grade Scholarship, 2013

- University-level Outstanding Student, 2013

Competitions

- iMaterialist Challenge on Product Recognition (Fine-grained image classification of products at FGVC6, CVPR'19 workshop): ranked 4th globally (Team leader).

- MSR-VTT Challenge (video captioning): ranked 4th in human evaluation and ranked 5th in the automatic evaluation metrics (Team leader), 2016

- Top 10% in the Kaggle Right Whale Recognition Competition, 2016

- Second Prize in the DataCastle Verification Code Recognition Competition, 2016

- Second Prize (National-level) in China Graduate Student Mathematical Contest in Modeling, 2015

- MCM/ICM -- Honorable Mention, 2012

- First Prize (National-level) in Electrical Engineering Mathematical Contest in Modeling, 2012

- First Prize (National-level) in the China Undergraduate Mathematical Contest in Modeling, 2011

- Second Prize in the Jiangsu High School Physics Competition

Teaching Assistant

- 2015.9-2016.1, Fudan University, COMP120008.02, C++ Language Programming